"It works on my machine."

Four words that have haunted software engineering since the dawn of personal computers. You'd think that by 2026, with Docker and Kubernetes and Nix and Dev Containers and an entire platform engineering movement, we'd have put this ghost to rest. We haven't.

A survey of over 650 engineering leaders found that 67% of software teams still can't build and test their dev environment within 15 minutes. It gets worse: 72% of engineers say demands on their time make it hard to build new features, and they spend only 16% of their week actually writing code. A big chunk of the rest? Fighting tooling.

Here's the twist. The fix didn't come from DevOps. It came, almost by accident, from AI.

The cloud-first AI development tools that have exploded over the past year didn't set out to solve environment drift. But agents that spin up their own environments, do the work, and hand you a PR solved it anyway, as a side effect of their architecture. And that accident might matter more than the code they write.

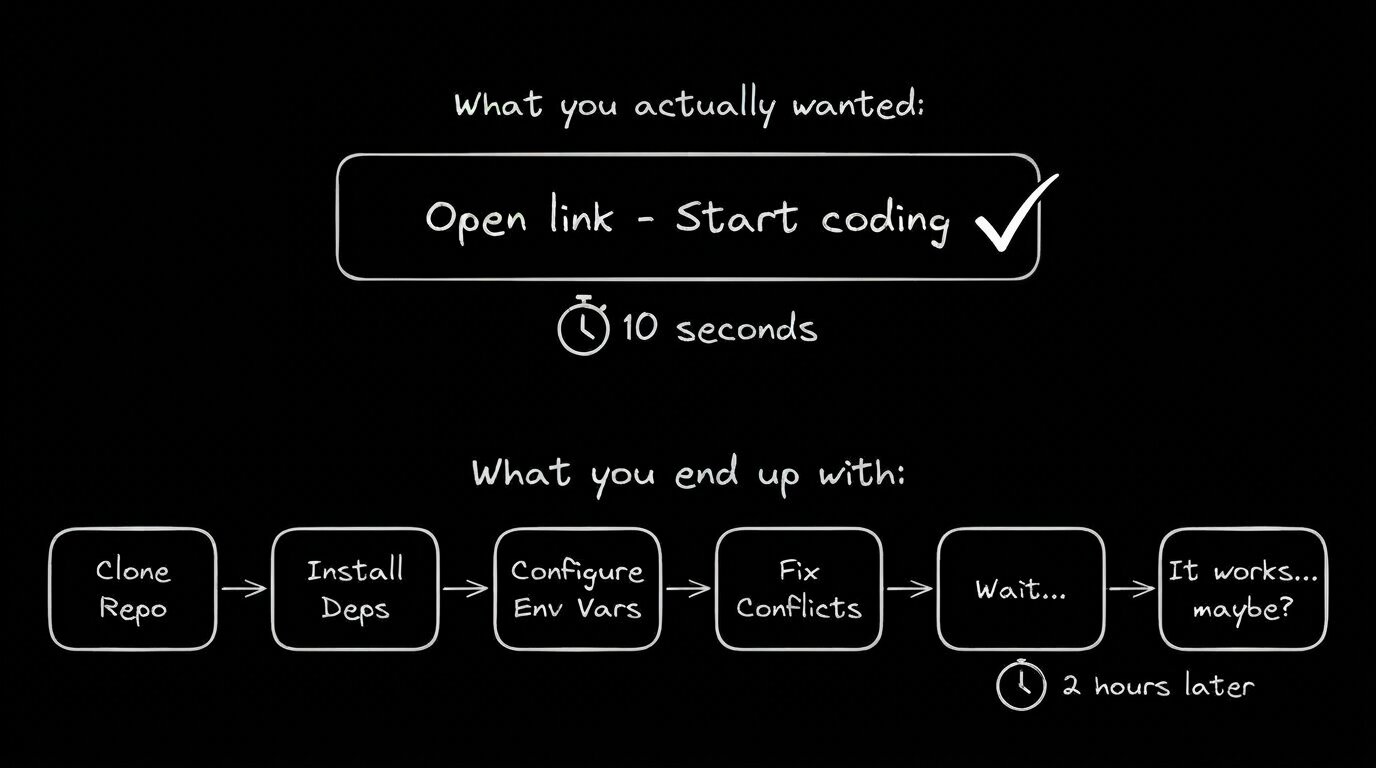

You know the drill. New project, new repo, new pain:

Clone. Install dependencies. Discover the README is three versions out of date. Manually configure environment variables. Realize someone's .env.example is missing half the keys. Fix a port conflict with the other project you forgot was running. Wait twelve minutes for npm install to finish. Pray.

And that's the happy path. The one where nothing fundamentally incompatible lurks in your system Python or your Node version or your shell configuration. The one where you don't spend a full afternoon learning that the project secretly requires a specific version of Postgres that conflicts with the one you already have.

This is the core tension of AI orchestration. When your environment is a bespoke snowflake, everything built on top of it inherits that fragility. And now there's something new built on top of it: AI agents.

The Anthropic 2026 Agentic Coding Trends Report frames a shift that most of us are already living: development is moving from writing code to orchestrating agents that write code. Developers now use AI in roughly 60% of their work.

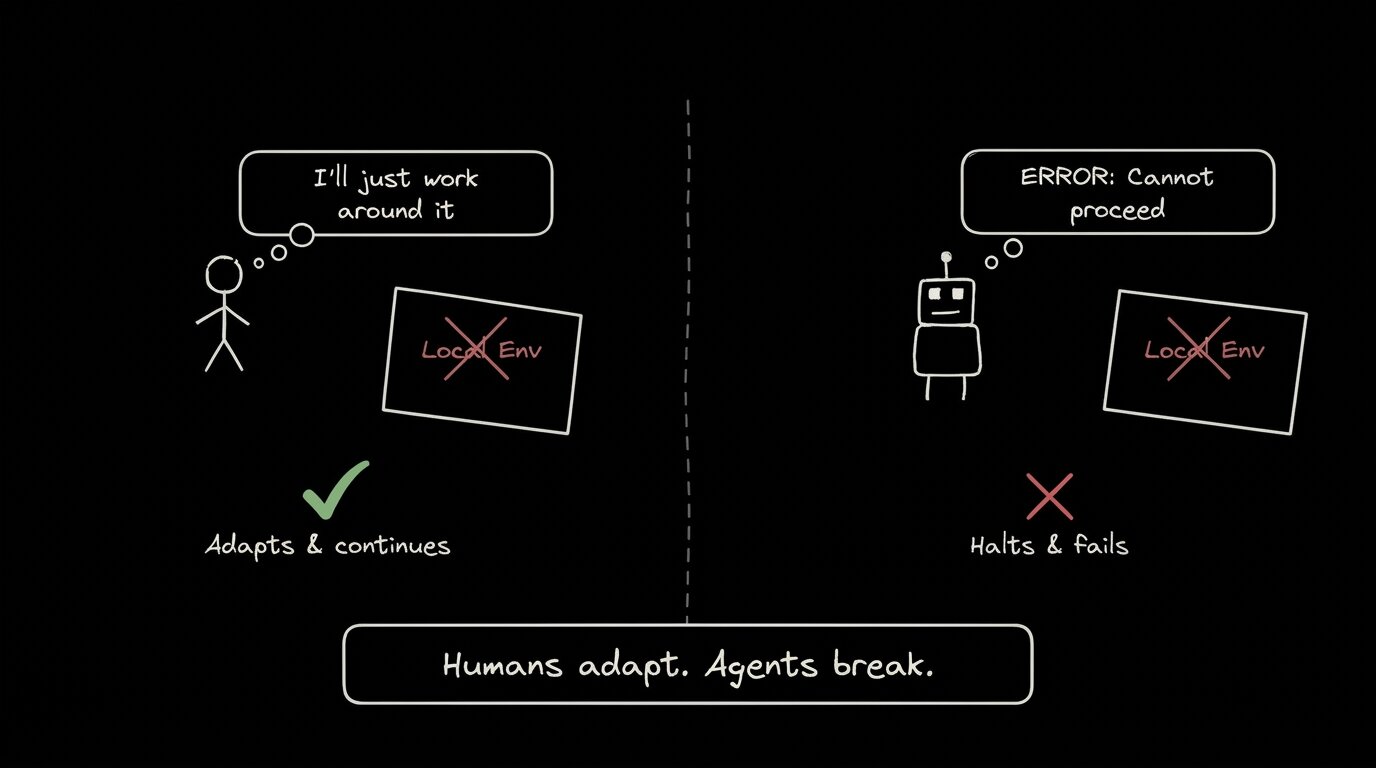

But here's the thing about agents. They're less forgiving than you are.

You, a human, can look at a failing npm install and think, "Oh right, I need to switch to Node 20 for this repo." You adapt. You context-switch. You work around it. An AI agent? It either hallucinates a fix that makes things worse, or it just stops. As Coder's VP of Product put it: "Asking an agent to operate in a janky local setup is like asking someone to learn to drive in a car where the steering wheel only sort of works sometimes."

The problem compounds with scale. When you're running multiple agents in parallel, which is increasingly how real work gets done, each agent inherits your local environment's quirks. Different tool installs across machines cause agents to produce different outputs for the same prompt. Parallel runs compete for ports, filesystem state, and memory. Reproducibility doesn't just drift. It evaporates.
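One way to make "different tool installs across machines" visible, instead of discovering it through inconsistent agent output, is to fingerprint the toolchain each run actually used. This is a minimal sketch, assuming you can collect tool versions into a plain object; `toolchainFingerprint` is a hypothetical helper, and FNV-1a is used only because it is tiny and dependency-free, not because any agent platform uses it.

```typescript
// Hypothetical shape: tool name -> version string, e.g. { node: "20.11.1" }.
type Toolchain = Record<string, string>;

// Sort tool names so the same toolchain always serializes identically,
// regardless of the order in which versions were collected.
function canonicalize(tools: Toolchain): string {
  return Object.keys(tools)
    .sort()
    .map((name) => `${name}@${tools[name]}`)
    .join("\n");
}

// 32-bit FNV-1a over UTF-16 code units (identical to byte-wise FNV-1a for ASCII).
// Good enough to spot drift between runs; not a cryptographic hash.
function fnv1a32(input: string): string {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, wrapped to 32 bits
  }
  return (hash >>> 0).toString(16).padStart(8, "0");
}

// Two runs with different fingerprints did not execute in the same environment.
function toolchainFingerprint(tools: Toolchain): string {
  return fnv1a32(canonicalize(tools));
}

// Usage: compare what two machines (or two agent runs) actually had installed.
const laptop = toolchainFingerprint({ node: "18.19.0", pnpm: "8.15.0", python: "2.7.18" });
const ci = toolchainFingerprint({ pnpm: "8.15.0", node: "20.11.1" });
console.log(laptop === ci ? "same toolchain" : "toolchain drift detected");
```

A mismatch tells you two runs aren't comparable; it can't tell you which one is "right," which is part of why taking the local machine out of the loop is so appealing.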

If your environment is lying to you, you'll probably notice. If it's lying to your agents, you'll get confidently wrong code in a PR you might approve.

I see this all the time. The other day, my agent rewrote an import path to use a package alias that only existed in the local tsconfig, one that had drifted from the repo's canonical version months ago. The code looked perfectly fine. It passed the agent's own checks. It broke in CI. That's an hour of debugging for something that never should have been possible in the first place.
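Drift like this is cheap to detect mechanically. Here is a minimal sketch, assuming both tsconfig files have already been parsed into plain objects (tsconfig allows comments and trailing commas, so plain `JSON.parse` may reject it); `diffPathAliases` and the alias names are hypothetical, and a real check would also need to follow `extends` chains.

```typescript
// Shape of compilerOptions.paths in tsconfig.json: alias pattern -> target globs.
type PathMap = Record<string, string[]>;

interface AliasDrift {
  onlyLocal: string[]; // aliases the local config has but the repo doesn't
  onlyCanonical: string[]; // aliases the repo defines but the local config lacks
  changed: string[]; // aliases in both that resolve to different targets
}

// Own-property check, so keys like "toString" aren't mistaken for aliases.
const hasOwn = (obj: object, key: string): boolean =>
  Object.prototype.hasOwnProperty.call(obj, key);

function sameTargets(a: string[], b: string[]): boolean {
  return a.length === b.length && a.every((target, i) => target === b[i]);
}

function diffPathAliases(canonical: PathMap, local: PathMap): AliasDrift {
  return {
    onlyLocal: Object.keys(local).filter((k) => !hasOwn(canonical, k)).sort(),
    onlyCanonical: Object.keys(canonical).filter((k) => !hasOwn(local, k)).sort(),
    changed: Object.keys(local)
      .filter((k) => hasOwn(canonical, k) && !sameTargets(local[k], canonical[k]))
      .sort(),
  };
}

// Usage: the local config has quietly grown an alias the repo never shipped.
const drift = diffPathAliases(
  { "@/*": ["src/*"] },
  { "@/*": ["src/*"], "@ui/*": ["src/components/ui/*"] },
);
console.log(drift.onlyLocal); // [ '@ui/*' ]
```

Run before accepting an agent's import rewrites, a non-empty `onlyLocal` is exactly the signal that would have flagged the alias above before CI did.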

Containerization was the correct impulse. Docker, Nix, Dev Containers: these tools all recognized that environment consistency is a prerequisite for reliable software, not a nice-to-have.

The problem is that every one of these solutions adds something to your workflow:

- Docker: Dockerfiles to write and maintain, image sizes to manage, and on Mac, the perennial filesystem mount performance tax. It works. It also asks a lot of you.

- Nix: Technically beautiful for reproducibility. But the learning curve is steep enough to have its own subreddit support group.

- Dev Containers: Standardizes nicely, but requires VS Code (or a compatible editor) and adds container startup time to every session.

- Platform engineering: The enterprise answer. Dedicated teams building internal developer platforms. Effective, if you can afford to staff it.

These are all bolt-on solutions. They layer consistency on top of local development. You still start with a local machine, and you add tooling to make that machine behave consistently. That's better than nothing. But it's not the same as making the problem disappear.

More importantly, every bolt-on solution requires someone to maintain it. Dockerfiles go stale. Nix flakes need updating. Dev Container configs drift. You're trading one maintenance burden for another, and now you have two things that can break instead of one.

Cloud-first AI coding tools like Builder, Claude Code, Cursor's background agents, or Devin didn't set out to fix "it works on my machine." They set out to make AI-powered development fast and accessible. But their architecture makes environment inconsistency structurally impossible.

Think about what happens when you use a cloud-based AI agent:

- You describe a task or assign an issue

- The agent spins up a fresh cloud environment: clean OS, correct dependencies, consistent tooling

- It does the work in that isolated container

- It opens a PR with the changes

- You review the diff
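The steps above can be sketched as a toy model, assuming a repository is just a map of file paths to contents and "the cloud" is a fresh in-memory copy per run. `EnvSpec`, `createEnvironment`, and `runTask` are hypothetical names, not any vendor's API; real tools provision containers or VMs from something like the spec. The point the sketch makes is structural: because every run copies from the same pinned spec and the same clean checkout, one run's edits can't leak into the next.

```typescript
// Hypothetical pinned spec; a real system would provision the sandbox from it.
interface EnvSpec {
  readonly image: string;
  readonly tools: Readonly<Record<string, string>>;
}

// A run's private workspace. In this toy model the repo is path -> contents.
interface Environment {
  readonly spec: EnvSpec;
  readonly files: Map<string, string>;
}

interface PullRequest {
  task: string;
  diff: string[]; // "A path" (added), "M path" (modified), "D path" (deleted)
}

// Every call copies the clean checkout, so no state carries over between runs.
function createEnvironment(spec: EnvSpec, repo: ReadonlyMap<string, string>): Environment {
  return { spec, files: new Map(repo) };
}

function runTask(
  task: string,
  spec: EnvSpec,
  repo: ReadonlyMap<string, string>,
  work: (env: Environment) => void,
): PullRequest {
  const env = createEnvironment(spec, repo); // fresh environment per task
  work(env); // the agent only ever touches its own copy
  const diff: string[] = [];
  env.files.forEach((content, path) => {
    if (!repo.has(path)) diff.push(`A ${path}`);
    else if (repo.get(path) !== content) diff.push(`M ${path}`);
  });
  repo.forEach((_content, path) => {
    if (!env.files.has(path)) diff.push(`D ${path}`);
  });
  return { task, diff: diff.sort() }; // hand back a reviewable diff; env is discarded
}

// Usage: two tasks against the same spec and checkout never see each other's edits.
const spec: EnvSpec = { image: "example/node-20", tools: { node: "20.11.1" } };
const repo = new Map([
  ["README.md", "# app"],
  ["src/index.ts", "export {};"],
]);
const first = runTask("fix heading", spec, repo, (env) => {
  env.files.set("README.md", "# App");
});
const second = runTask("add helper", spec, repo, (env) => {
  env.files.set("src/util.ts", "export const one = 1;");
});
console.log(first.diff, second.diff); // [ 'M README.md' ] [ 'A src/util.ts' ]
```

The only thing that leaves a run is the diff; the environment itself is thrown away, which is why there's nothing left to drift.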

At no point does the agent touch your local machine. At no point does it inherit your .zshrc aliases, your stale Homebrew packages, or the rogue Python 2.7 that's been lurking in your PATH since 2019. You didn't configure anything. You didn't debug anything. You just described what you wanted and reviewed what came back.

This isn't a feature in and of itself. It's just a structural byproduct of moving execution to the cloud. Every run starts from the same clean slate. No leftover state, no version mismatches, no port conflicts. The "it works on my machine" problem doesn't get solved; it gets eliminated, because there's no longer a "my machine" in the equation.

The best AI coding tools of 2026 all share this pattern to varying degrees. Cloud execution isn't just a deployment convenience. It's an environment consistency guarantee that you get for free.

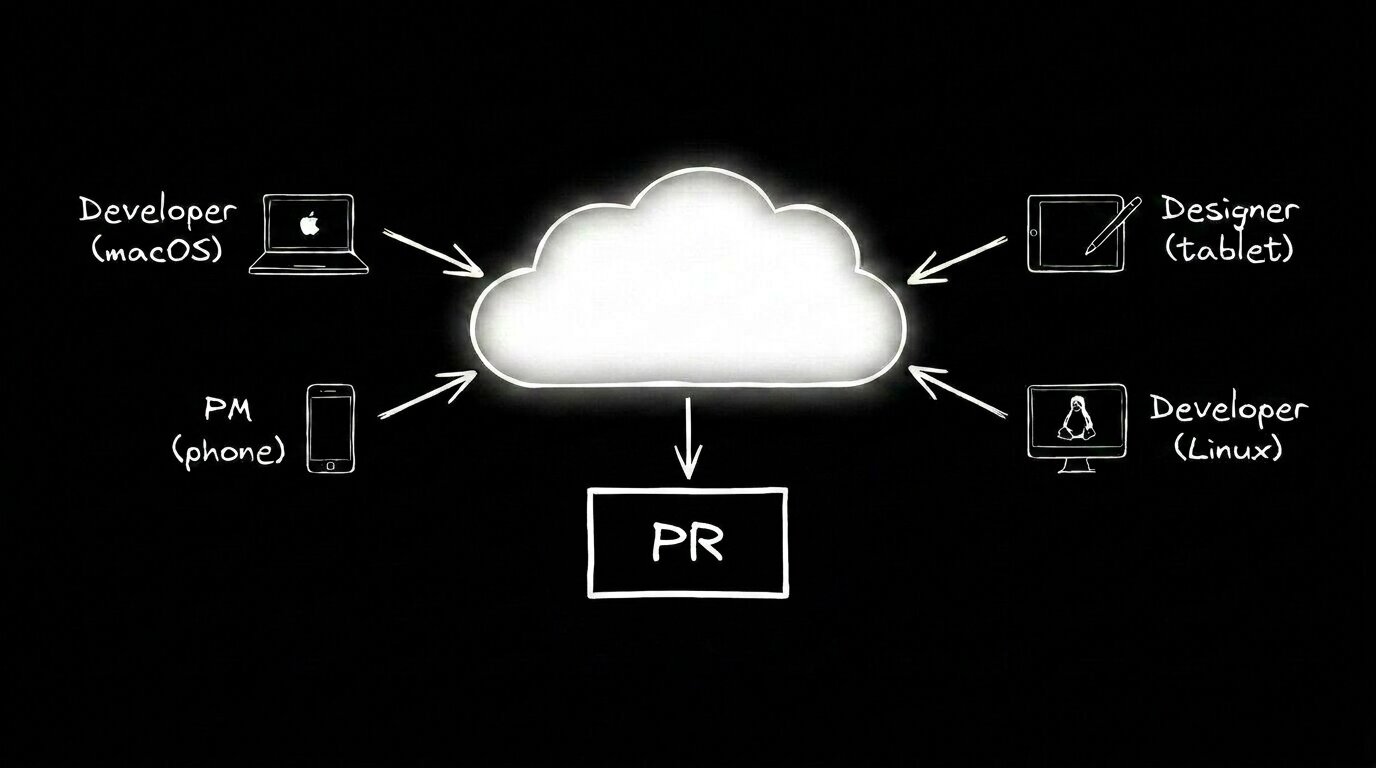

The implications go beyond just "no more env bugs." When there's no setup ritual, the barrier to contributing code drops to nearly zero. This is the shift I described in *The AI Software Engineer in 2026*: the developer as orchestrator, not the sole gatekeeper of a local environment that only they understand.

Onboarding gets faster. New hires don't spend their first three days fighting tooling. Open source contributors don't bounce off your project because your README assumes a specific OS. And the async bonus is real: fire off a task, close your laptop, get a notification when the PR is ready. Review it from your phone if you want. The cloud environment doesn't care what device you're on.

I work in developer experience, which mostly means marketing, community, and docs. My relationship to our codebase isn't "I'm assigned sprint tickets." It's "I notice things that are broken because I talk to developers all day."

Previously, "noticing something broken" meant filing a Jira ticket, describing the issue, and waiting for eng to prioritize it. For small paper cuts, that often meant it never got fixed. The overhead of filing, triaging, and assigning a minor annoyance was bigger than the annoyance itself. So you just live with it. You mention it in a Slack thread, someone agrees it's annoying, and the thread dies.

Now that same Slack thread is the fix. I can tag the @Builder.io bot directly in the conversation where I'm already discussing the problem. A few minutes later I get a notification with a link to a live preview. I click through to a full dev environment where I can test the fix, poke around, and make further changes myself if I need to.

Sometimes I just confirm the paper cut is gone and approve. Sometimes I dig in and adjust things at the code level. The point is I can operate at whatever level of granularity the situation calls for, without anyone setting anything up for me.

The shift here isn't just speed. It's that the people closest to a problem can now fix it. I see community pain points every day that engineering will never prioritize, because they're small and there's always something bigger on the roadmap. Now those paper cuts actually get addressed, by the person who noticed them, in the conversation where they came up.

This pattern is everywhere at Builder. Designers submit PRs with clean one-line diffs for layout tweaks. PMs fully prototype their own feature ideas, and those prototypes become our real code. Engineers end up reviewing already-working implementations instead of translating Figma specs into code. The tedious handoff work is gone, and developers focus on the parts that actually need engineering judgment.

All of it runs on the same consistent cloud environment. No "which branch are you on." No "did you run npm install." That consistency makes everything else work.

The best developer tools don't ask you to fix your environment. They make the problem invisible.

Docker asked you to learn a new tool. Nix asked you to learn a new language. Platform engineering asked your company to hire a new team. AI coding agents didn't ask you anything. They just moved the environment to the cloud, and the problem went away.

That might be AI's most underrated contribution to developer experience. Not the code it writes. Not the PRs it opens. The fact that "it works on my machine" is finally, little by little, becoming irrelevant.

The industry spent two decades trying to solve environment consistency with more tooling. Turns out, the answer was to remove the environment from the equation entirely. Sometimes the best fix is just better architecture.