AI writes more code than your review process was designed to handle. Why every PR now needs an agent that opens your product and uses it.

For twenty-five years, software teams optimized around one assumption: code was expensive to write. That single fact shaped every process we built. Teams planned carefully, spec'd thoroughly, and designed before anyone touched a keyboard. By the time a developer started writing, every decision had been pressure-tested through meetings, documents, and design reviews. The cost of writing the wrong code was high enough to justify all that overhead.

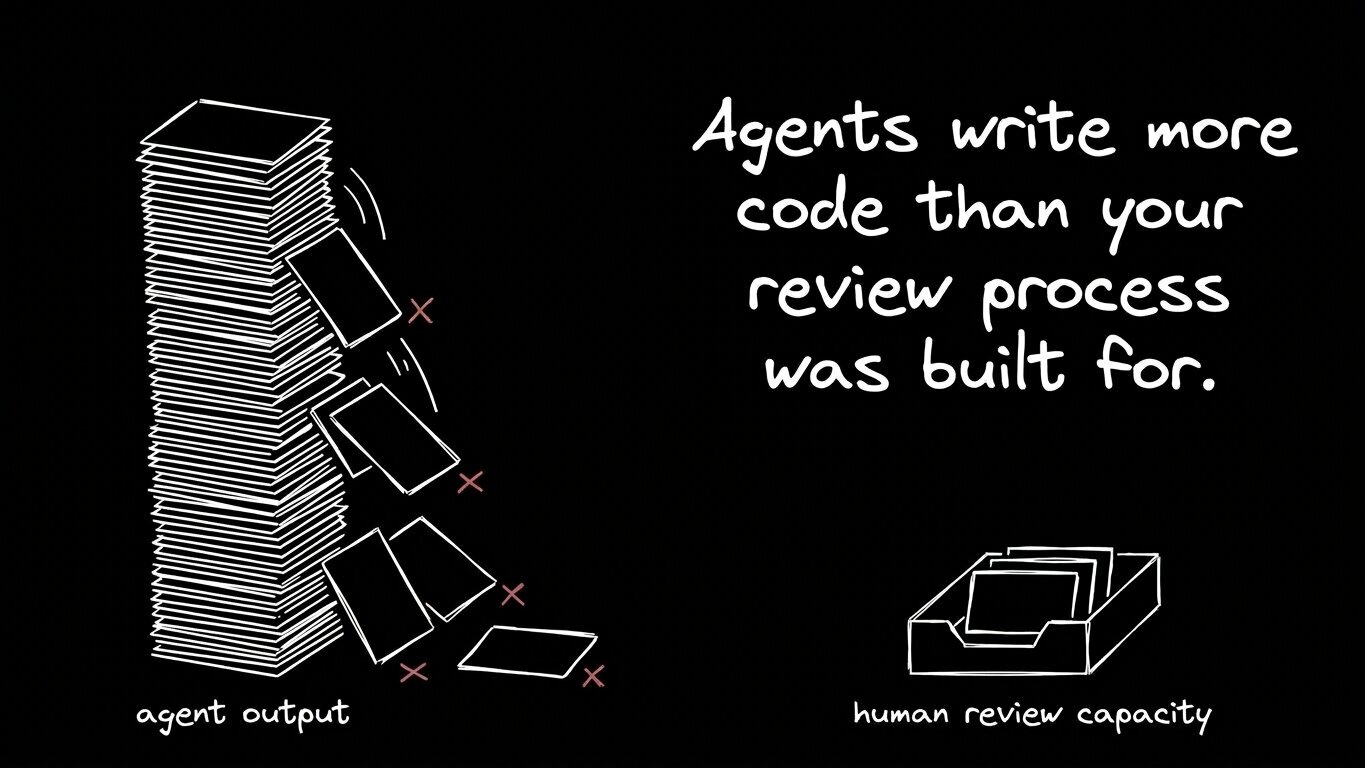

That assumption no longer holds. AI agents now turn ideas into working code in minutes. A bug report can be fixed before the standup ends. A PM's idea can become a working prototype before the spec gets written. In the most aggressive shops, agents open as many PRs in a week as the whole engineering team.

So the constraint moved. Writing code used to be the slow, expensive step in the lifecycle, and the cost of doing it has fallen close to zero. The slow step now is something that always took time and always will: knowing whether the code actually works.

When a human engineer writes a feature, they test it as they build. They click through the flow. They try the edge cases. They know, in their hands, that the thing works before they push. Review is a second pair of eyes on code that has already had a careful first pair.

Agent-generated code arrives at review without that step. The agent writes the diff and runs the unit tests. The product itself stays unopened. Nobody fills out the form. Nobody watches what happens when the network call fails. Nobody notices the empty state is broken. The code looks right. It compiles. The tests pass. Then a customer hits a bug nobody walked through.

This is the failure mode of the agent-native shift. The industry's response so far has been to add more code review at the diff layer. AI code reviewers read the diff. Coding assistants reason about the changes. These tools are real improvements, and most teams should use them. They cover the layer the human engineer used to cover by reading their own code, which means they catch the things a careful diff would catch.

What stays uncovered is everything that only shows up once the product runs: buttons that don't fire, forms that submit empty, redirects that 404, error states that nobody designed. These are the things nobody scripted because nobody thought they would break. None of that shows up in a diff. It shows up when something uses the product in a real browser with real interactions, which is exactly the step that gets skipped when agents are doing the writing.

Every team has someone who clicks through every PR before it merges. Sometimes it's a QA engineer. Sometimes it's the developer who opened the PR. Sometimes it's whoever's free that day. The job is always the same: open the change, walk the flows, look for what broke.

That job worked when ten PRs landed a day. It struggles when a hundred do, and it falls apart when half of those PRs come from agents acting on tickets, Slack threads, and customer feedback the human reviewer never saw. Volume is one part of the problem. Context is another. The reviewer doesn't have what the agent had: the customer message in Slack, the ticket the PM filed, the conversation that led to the change. They have a diff, a description, and forty-five seconds before the next PR lands.

So most teams quietly let it slide. They write end-to-end test suites for the critical paths and hope the rest holds. They rely on customer bug reports as the real QA layer. They ship faster than they ever have and absorb a steady trickle of regressions as the cost of doing business in the AI era.

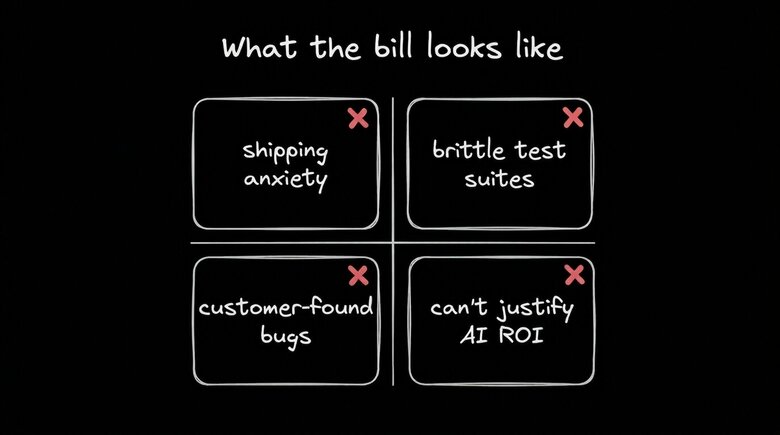

This is the bill for agent productivity coming due. Faster generation paired with the same review capacity means more behavioral coverage gets skipped on every PR, and the compounding effect is already visible: shipping anxiety on every frontend change, brittle test suites that nobody trusts, customer-found bugs creeping up, and engineering leaders who can't tell their CFO whether the productivity gains from AI are real or whether they're being paid back in support load and churn.

The natural response to this is to reach for what's already in the toolbox. Most teams have unit tests, integration tests, and end-to-end suites for critical flows. Many added an AI code reviewer in the last twelve months. A few have tried wiring up browser automation themselves, giving an agent the primitives to drive a browser and see what happens.

Every one of these layers carries weight in a modern codebase, and each has a defined edge for what it covers. The hole opens in the same place each time. Here's what each layer covers, and where it stops:

| Approach | Covers | Doesn't cover |
|---|---|---|
| Unit and integration tests | Logic and contracts at the function level | Behavior in a real browser; flows nobody scripted |
| Scripted end-to-end suites | Critical paths someone took the time to write | Edge cases nobody anticipated; flows added after the suite was written |
| AI code reviewers | Issues visible in the diff itself | Anything that only surfaces when the product runs |
| DIY browser automation | One developer's task, one prompt at a time | Coordinated coverage across a team's PRs |

The pattern is consistent. Each layer covers a slice of the problem, leaving a widening gap in behavioral coverage every time an agent opens a PR. Scripted suites only catch what someone took the time to script. Code review agents work at the wrong layer for the failure mode that matters now. DIY browser automation gives an agent the primitives to drive a browser, and turning that into coordinated coverage across a team's PRs means building flow inference, a severity policy, a replay viewer, and PR integration on top. Underneath all of it sits a question about engineering time: building QA infrastructure pulls that time away from shipping product, and most teams that go down that road find their bespoke version drifts out of sync with the rest of their stack within a quarter.

Something has to walk through the product on every PR. The volume of changes has exceeded what humans can absorb, which means that something has to be an agent.

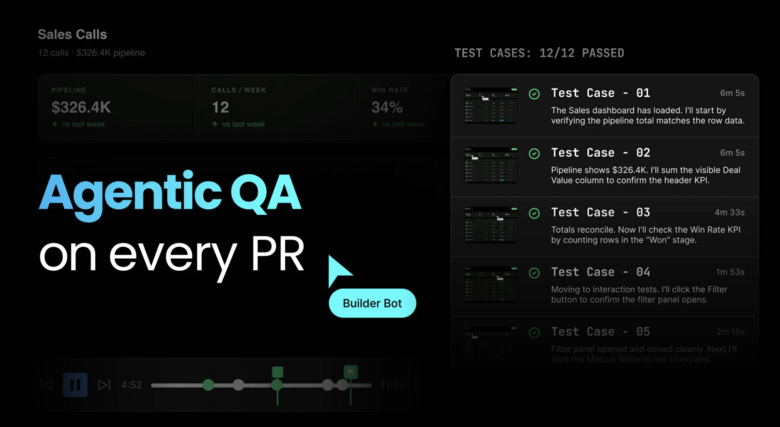

What that agent does sits at the behavior layer of the stack, one rung up from where diff-reading agents work today. It opens the product in a real browser. It clicks. It types. It walks the flows the change touches, and reports what broke, with enough evidence that a human reviewer can verify the finding in seconds. This is the layer that's been missing from agent-native development, and it's the layer we built Quality Review Agent to fill.

On every PR your team opens, Quality Review Agent spins up a real browser, loads your product, and uses it. It reads the PR title, description, and diff to figure out what changed, then walks the change end-to-end across three layers of coverage:

- Critical flows. The happy path for whatever the change touches. If a PR modifies a checkout step, the agent walks through the checkout process.

- Edge cases. Empty states, invalid input, rate limits, error paths. The boring failure modes that humans skip when they're tired, and the suite skips when nobody scripted them.

- Regressions. Whether this change broke anything nearby. A tweak to a dashboard filter re-tests the charts that depend on it.
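To make the three layers concrete, here is a minimal sketch of how a coverage plan could be derived from a PR's changed files. It is an illustration, not how Quality Review Agent works internally: the `Flow` type, the file-to-flow mapping, the `depends_on` links, the fixed `EDGE_CASES` list, and the file paths are all assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Flow:
    """A user-facing flow (hypothetical model, not a Builder API)."""
    name: str
    files: set[str]  # source files this flow exercises
    depends_on: set[str] = field(default_factory=set)  # flows it builds on


# Assumed list of "boring" failure modes to probe on every touched flow.
EDGE_CASES = ["empty state", "invalid input", "rate limit", "error path"]


def plan_coverage(changed_files: set[str], flows: list[Flow]) -> dict[str, list[str]]:
    """Split the work for one PR into critical flows, edge cases, and regressions."""
    # Flows whose files overlap with the diff are directly touched by the change.
    touched = [f for f in flows if f.files & changed_files]
    touched_names = {f.name for f in touched}

    critical = [f"{f.name}: happy path" for f in touched]
    edge_cases = [f"{f.name}: {case}" for f in touched for case in EDGE_CASES]
    # Untouched flows that depend on a touched flow get re-tested as regressions.
    regressions = [
        f"{f.name}: re-test"
        for f in flows
        if f.name not in touched_names and f.depends_on & touched_names
    ]
    return {"critical": critical, "edge_cases": edge_cases, "regressions": regressions}


flows = [
    Flow("dashboard filter", {"src/filter.tsx"}),
    Flow("dashboard charts", {"src/charts.tsx"}, depends_on={"dashboard filter"}),
    Flow("checkout", {"src/checkout.tsx"}),
]
plan = plan_coverage({"src/filter.tsx"}, flows)
for layer, steps in plan.items():
    print(layer, steps)
```

In this toy setup, a change to the dashboard filter plans the filter's happy path and edge cases, schedules a re-test of the charts that depend on it, and leaves checkout alone.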

When the run is complete, the agent posts what it found back to the PR, including a video replay, network calls, and console logs synced to the timeline. Every flagged bug comes with the receipts: a frame-by-frame replay showing exactly what broke, with the failed network request and the console exception at the right second. The reviewer scrubs to the moment it fired, sees the agent's reasoning at that step, and decides whether to merge or fix.
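One way to picture "synced to the timeline" is as a merge of timestamped event streams, followed by a lookup around the moment a bug was flagged. The sketch below assumes each stream is already sorted by time; the `Event` type, the sample events, and the half-second window are hypothetical and only illustrate the idea.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One recorded event (hypothetical shape)."""
    t: float      # seconds since the start of the recording
    source: str   # "network" or "console"
    detail: str


def merge_timeline(*streams: list[Event]) -> list[Event]:
    """Merge already-sorted event streams into a single timeline ordered by time."""
    return list(heapq.merge(*streams, key=lambda e: e.t))


def events_near(timeline: list[Event], t: float, window: float = 1.0) -> list[Event]:
    """Return the events within `window` seconds of the flagged moment `t`."""
    return [e for e in timeline if abs(e.t - t) <= window]


network = [
    Event(2.1, "network", "GET /api/cart 200"),
    Event(7.4, "network", "POST /api/checkout 500"),
]
console = [
    Event(7.5, "console", "TypeError: cannot read properties of undefined"),
    Event(12.0, "console", "warn: slow render"),
]

timeline = merge_timeline(network, console)
evidence = events_near(timeline, t=7.4, window=0.5)
for e in evidence:
    print(f"{e.t:>5.1f}s  {e.source:<8} {e.detail}")
```

Scrubbing the replay to 7.4 seconds would then surface the failed request and the console exception together, which is the kind of evidence a reviewer needs to verify a finding quickly.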

Code review and quality review cover different layers of the same PR, which is why teams need both. The two run in parallel, so the total latency tracks the slower of the two, and full behavioral and code-level coverage on every change comes without slowing the team down.
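The latency claim is easy to check with a toy model. In the sketch below, two stand-in coroutines sleep for placeholder durations (0.1 and 0.3 seconds, chosen arbitrarily) to represent a code review and a quality review; neither calls any real service, and the function names are assumptions for illustration.

```python
import asyncio
import time


async def code_review(seconds: float) -> str:
    """Stand-in for a diff-level review; the sleep is a placeholder for real work."""
    await asyncio.sleep(seconds)
    return "code review done"


async def quality_review(seconds: float) -> str:
    """Stand-in for a browser-level review; the sleep is a placeholder for real work."""
    await asyncio.sleep(seconds)
    return "quality review done"


async def review_pr() -> tuple[list[str], float]:
    """Run both reviews concurrently and measure the wall-clock time."""
    start = time.perf_counter()
    results = await asyncio.gather(code_review(0.1), quality_review(0.3))
    elapsed = time.perf_counter() - start
    return list(results), elapsed


results, elapsed = asyncio.run(review_pr())
print(results, f"{elapsed:.2f}s")
```

Run one after the other, the two reviews would take about 0.4 seconds; run concurrently, the elapsed time lands near 0.3 seconds, the duration of the slower one.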

When a flagged bug comes back, anyone on the team can resolve it. Every finding has a "Fix in Builder" button that lets the person closest to the problem describe the fix in plain English, hand it to the agent, push the update to the same branch, and re-run. The PM who opened the ticket can fix the copy themselves. The designer who refined the layout can fix the spacing. The engineer reviewing the diff can fix the architecture. The work doesn't bottleneck on whoever happens to own the code path.

Agent-native development is still early enough that most of the conversation is about the generation side. Faster code, more PRs, more roles contributing. The trust side moves more slowly through the news cycle because the breakdown is slow, too. Regressions trickle in. Customer-found bugs creep up. Engineering leaders start hedging when the CFO asks about productivity gains. The compounding cost is real, showing up in support load and churn over months, in small recurring damage that rarely makes the headlines.

The teams that get ahead of this build the trust layer alongside the generation layer. A code review agent on every PR. A quality review agent on every PR. Both run in parallel, giving the human reviewer the receipts they need to merge or fix in seconds. The work that used to take a human reviewer an hour now takes them two minutes, and the work that used to be skipped entirely now happens by default.

This is the bet behind Quality Review Agent, and the bet behind the rest of Builder. The first wave of the platform was collaboration: putting designers, PMs, and engineers on the same branch, working from real code, with real components. Quality Review Agent is the next layer of the same thesis. Once a team is shipping code from across roles and across agents, trust becomes the live question on every PR. Did this work, in a real browser, the way a customer is going to use it? An agent doing the work humans used to do, at the volume the platform now produces, is how we answer that question on every PR.

The teams that win the AI-native era will be the ones shipping the most working software. With code generation as abundant as it is now, the deciding factor is whether all that generated work reaches users in working order. The trust layer is how we get there.

Check out the announcement on Builder’s Quality Review Agent, and sign up for free to try it on your own codebase.