When every role can prompt agents, validate in real time, and move work forward directly, the handoffs stop piling up. Here's what that looks like in practice.

When companies adopt AI coding tools, the pattern usually looks like this: developers gain access, individual contributor productivity increases, and delivery timelines remain flat. AI made developers faster at the one step they already owned, and left everything around that step exactly as it was.

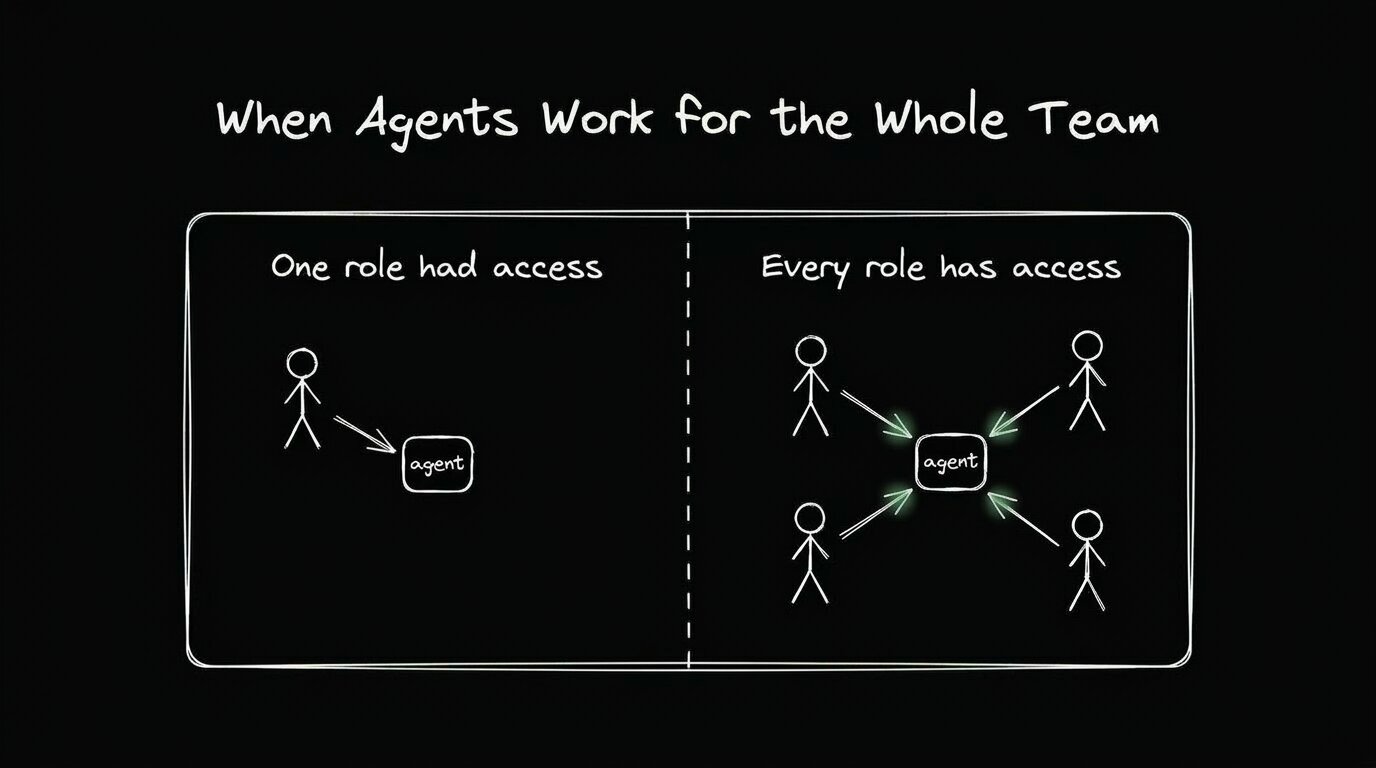

The teams closing the gap between AI promise and actual delivery throughput are taking a different approach. They're putting agents in the hands of the whole product team, not just the engineers.

The standard product workflow is sequential by design. A PM defines the work, a designer shapes it, an engineer builds it, and QA validates it. Each step waits for the previous one to finish, and each handoff carries a queue. This structure made sense when it was built because code was genuinely expensive to produce. Every change had to flow through the one function that could produce it. Everything before coding was prep work, meant to ensure engineers didn't have to recode anything once they got started.

That assumption is now outdated. Agents can produce working code from a prompt, and the cost of generating a first implementation has dropped close to zero. The question is no longer whether your team can afford to code something; it's who gets to write the prompt.

When only developers interact with agents, the sequential structure stays intact. Designers file redlines and wait for engineers to interpret them. PMs write specs that sit in sprint backlogs. QA waits until something is nearly finished before testing it. Engineers field clarification questions that interrupt their focus. Making the coding step faster doesn't change any of that. The workflow moves quickly in one narrow lane and at the same pace as before everywhere else.

This is why delivery metrics stay flat for most organizations after AI adoption. Individual velocity improved. The handoffs didn't.
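To make the arithmetic concrete, here is a minimal sketch of that effect. The stage names, day counts, and the 5x speedup are made-up illustrative numbers, not measurements from any team; the point is only that queue time between handoffs is untouched when a single step gets faster.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Stage:
    name: str
    work_days: float   # hands-on time spent in this stage
    queue_days: float  # time the work waits in a handoff queue before the stage starts


def lead_time(stages):
    """Total calendar time for a sequential workflow: work plus waiting."""
    return sum(s.work_days + s.queue_days for s in stages)


def with_faster_build(stages, speedup):
    """Return a copy of the workflow where only the 'build' stage is sped up."""
    return [
        replace(s, work_days=s.work_days / speedup) if s.name == "build" else s
        for s in stages
    ]


# Hypothetical numbers, chosen only for illustration.
BASELINE = [
    Stage("spec", work_days=2, queue_days=0),
    Stage("design", work_days=3, queue_days=2),
    Stage("build", work_days=5, queue_days=3),
    Stage("qa", work_days=2, queue_days=2),
]

before = lead_time(BASELINE)
after = lead_time(with_faster_build(BASELINE, speedup=5))
queue_total = sum(s.queue_days for s in BASELINE)

print(f"lead time before: {before} days, after: {after} days")
print(f"days spent waiting in queues (unchanged): {queue_total}")
```

Under these assumed numbers, a fivefold faster build step shortens the end-to-end timeline by only a few days, because the waiting between roles is exactly what it was before.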

When every role can interact directly with agents, the sequential structure begins to collapse. A designer can refine spacing and interactions directly in code without having to file a redline. A PM can turn a ticket into a working prototype without opening a Jira comment thread. QA can reproduce a bug, prompt a fix, and verify it in the same session. None of that work needs to touch an engineer until it's already been reviewed and validated by the people who would have generated rework cycles anyway.

The mechanics of this shift are worth walking through concretely, because the abstract version undersells how much it changes the actual experience of building software.

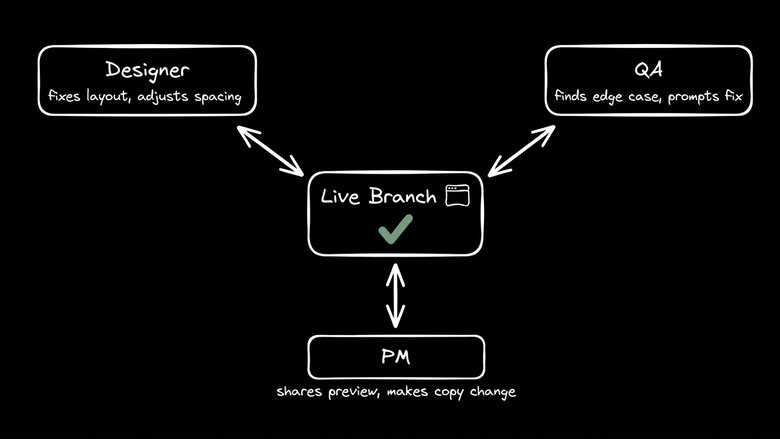

A product idea comes in. A PM kicks off an agent on the real codebase, gets a working implementation, and shares a preview link with the team. There's no spec document. There's no ticket waiting in a sprint backlog. There's a live branch with running code that anyone on the team can open in a browser. From there, the work moves in parallel:

- A designer opens the branch in a visual editor, fixes the layout, adjusts component spacing, and confirms the interaction behavior matches what was intended.

- QA steps through the flows, finds an edge case, and prompts a fix.

- The PM shares the preview with a customer, collects feedback, and makes a copy change on the spot.

By the time the branch reaches an engineer for final review, it has already been through design QA, functional testing, and a real-user feedback loop. The engineer reviews code, approves what ships, and moves on. They never opened a redline document. They never responded to a Slack message asking them to clarify a spec. They never fixed a spacing issue that a designer could have handled in thirty seconds with the right tool.

This is what multiplayer AI development actually means in practice. Every role moves work forward in the medium they understand. A designer who spots a spacing problem fixes it in the visual editor. A PM who has a copy change makes it directly in the branch. QA who finds an edge case prompts the fix and verifies it on the spot. None of that work routes through engineering.

None of this works if agents are generating generic code. A PM who prompts a change and gets back output that ignores your component library or overrides your design tokens hasn't saved anyone time. The work still lands on engineers, just in a worse form than if the engineer had built it from scratch.

The precondition for everything described above is context. Agents need to know your real system: your components, your tokens, your architectural patterns, and the reasoning behind decisions your team has already made. Builder indexes your codebase directly, reads your Figma component maps, and builds a model of how your design system actually works. That model is not an interpretation of what the system looks like, but a full understanding of the relationships between components, tokens, and patterns. When that context is in place, AI output matches your codebase from the first generation. Designers can refine it without having to deal with foreign component names. QA can test it against real behavior. Engineers can approve it without rewriting it first.

Context also shapes the feedback loop. When a PM builds a working prototype using your actual design system, stakeholder and customer feedback focus on something that looks and behaves like your real product. When a designer makes a refinement in a live branch, the refinement that goes to review is the actual change, not an approximation that an engineer would need to interpret. Every step that uses real context produces outputs that don't require translation before the next step can begin.

This is the mechanism that collapses the handoff cost. Every role can participate without creating downstream cleanup work for the people who come after them. It's why teams that try to stitch together disconnected tools — a coding agent here, a design handoff tool there — still end up with the same queues they started with. Integrated context across the full workflow is what makes the difference.

There's a version of this that sounds threatening to engineering teams, and it's worth addressing directly. Giving non-engineers the ability to write to a codebase raises legitimate questions about code quality, adherence to the design system, and what happens to standards when people who don't fully understand the system start making changes.

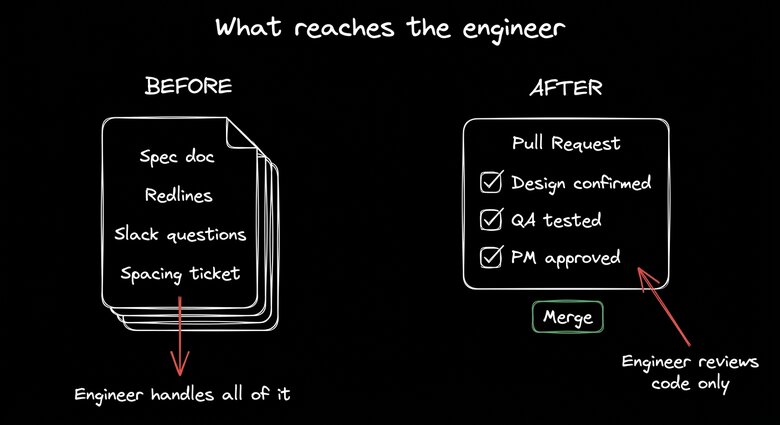

Engineers don't lose control in this model; they gain a clearer definition of what that control covers. Engineers retain merge authority. Nothing ships without their review. What changes is what the review contains by the time it reaches them.

When every role contributes through a workflow with structured approval stages, engineers receive pull requests that have already been reviewed by the people with the most context on what the change was supposed to do. The designer confirmed it looks right. The PM confirmed it behaves correctly. QA confirmed it doesn't break anything obvious. The engineer reviews the code itself, not its intent. That's a significantly smaller and more valuable scope of work than reviewing everything from scratch while also fielding questions about what the spec actually meant.
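One way to picture structured approval stages is as a simple gate in front of engineering review. The sketch below is illustrative only: the `PullRequest` shape, the role names, and the functions are hypothetical, not Builder's API or any particular code host's.

```python
from dataclasses import dataclass, field

# Assumed set of sign-offs required before a change reaches an engineer.
REQUIRED_SIGNOFFS = ("design", "product", "qa")


@dataclass
class PullRequest:
    title: str
    signoffs: set = field(default_factory=set)  # roles that have approved so far
    engineer_approved: bool = False              # engineers keep merge authority


def missing_signoffs(pr):
    """List the required roles that have not signed off yet, in a stable order."""
    return [role for role in REQUIRED_SIGNOFFS if role not in pr.signoffs]


def ready_for_engineer_review(pr):
    """A change reaches engineering only after design, product, and QA approve."""
    return not missing_signoffs(pr)


def can_merge(pr):
    """Nothing ships without the earlier sign-offs and the engineer's review."""
    return ready_for_engineer_review(pr) and pr.engineer_approved


pr = PullRequest(title="Update checkout copy")
pr.signoffs.update({"design", "product"})
print(missing_signoffs(pr))   # QA has not signed off yet
pr.signoffs.add("qa")
print(ready_for_engineer_review(pr), can_merge(pr))
pr.engineer_approved = True
print(can_merge(pr))
```

The design choice worth noting is that the engineer's approval is a separate, final condition: the earlier sign-offs narrow what the engineer has to look at, but they never substitute for the merge decision.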

Senior engineers didn't become senior engineers because they're good at moving buttons. They became senior engineers because they're good at making hard technical decisions, maintaining system integrity under pressure, and spotting the kinds of problems that only become visible at scale. A workflow that keeps their attention on those problems and routes everything else to the people better positioned to handle it is a better use of their time. Teams that have made this shift describe engineers finally focusing on architecture and hard problems rather than translating specs into pixels.

Organizations that adopt this model tend to describe the experience the same way: they stop feeling like engineering is the bottleneck and start feeling like the whole team is building together.

Features move from idea to production faster because the feedback loop starts earlier and runs in parallel. Fewer changes require rework at the end because each step is validated in context by the people with the most relevant expertise. Engineers spend more of their time on work that's genuinely hard and genuinely interesting, which matters for retention and for the quality of what they build.

The gap between AI's promise and actual delivery narrows once the workflow matches what AI can do. AI made code generation fast. Taking advantage of that requires redesigning the workflow around it. When every role can drive agents, prompt changes, and move work forward without waiting for someone else's queue to clear, the full pipeline becomes fast, not just one step in it. The path from prototype to production shortens because validation occurs continuously rather than at the end.

Every enterprise has the same graveyard of failed AI POCs. They promised speed. They delivered rework. The difference between those projects and the ones that actually change delivery throughput is almost always the same: whether AI was given to one function or built into how the whole team works together.

The handoff era isn't fading; it's over.

If your team has adopted AI tools and delivery timelines haven't moved, the workflow is the problem. Builder puts agents in the hands of your entire product team, connected to your real codebase, design system, and existing review process.