Last month, one Cloudflare engineer and an AI model rebuilt 94% of the Next.js API surface in under a week. The total cost was about $1,100 in tokens. The result, vinext, is already running in production for early customers.

This week, a separate Cloudflare fork of Vercel's just-bash project surfaced with a more troubling detail: the fork stripped out security-critical code, including prototype chain pollution protections, and kept a Python execution path the original project had specifically migrated away from because it couldn't be made secure.

Welcome to the age of the slop fork. AI makes it trivially cheap to fork a mature project and bolt on features fast. The output looks impressive. The underlying understanding is usually missing.

But the slop fork wave is a symptom of something bigger. AI is breaking the way open source has run for decades.

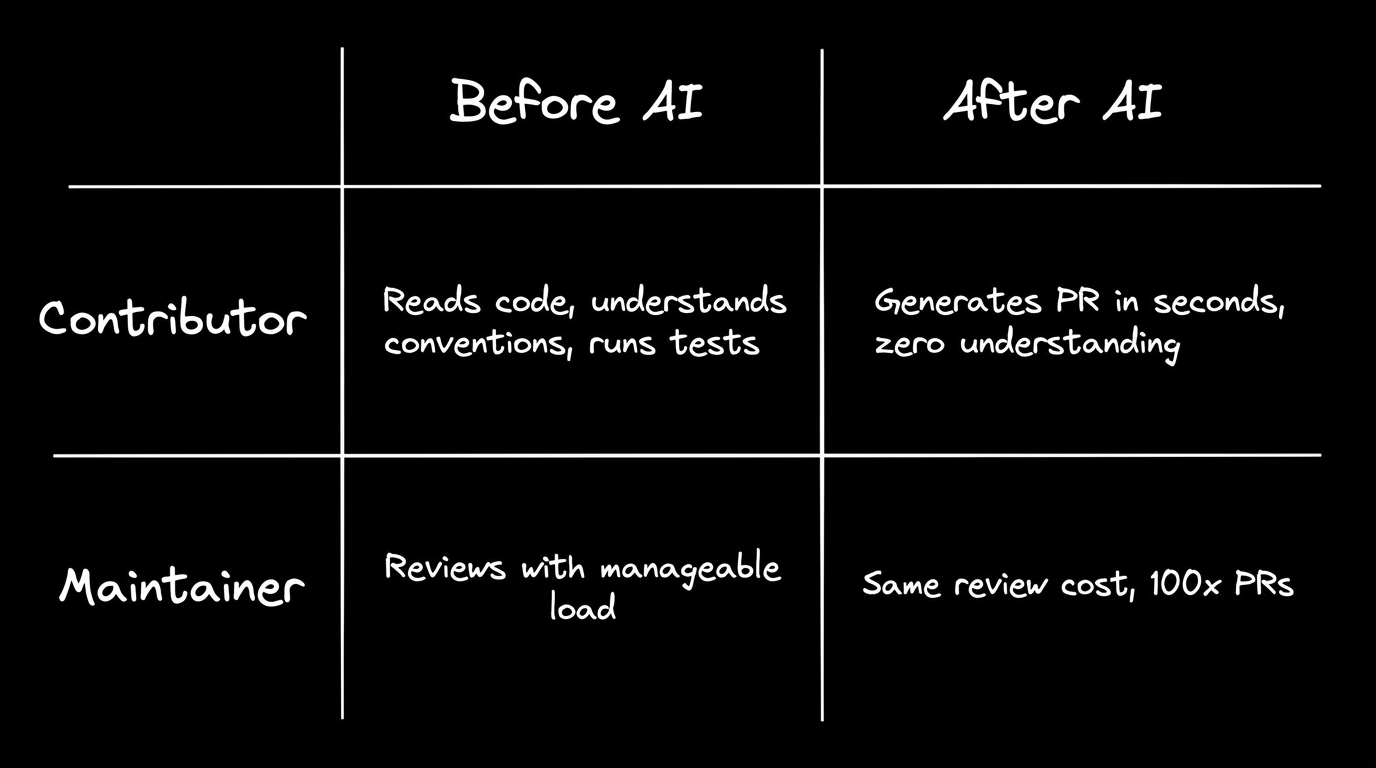

Open source used to have an implicit deal: contributors paid the cost of understanding before maintainers paid the cost of review.

You read the codebase. You understood the conventions. You ran the tests locally. You submitted something that respected the project's constraints. If you didn't, the PR got closed and nobody wasted much of anyone's time, because the contributor had already invested enough effort to make the submission worth looking at.

Maintainers still had to review, but the floor was high enough that the process worked. The cost of contributing filtered for quality.

AI blew that filter apart.

Now the cost of producing a pull request rounds to zero, but the cost of reviewing one hasn't changed at all. Worse, AI-generated PRs often look more polished than human ones at first glance, which means reviewers have to work harder to spot the problems hiding under clean formatting.

The burden shifted entirely to the people who were already volunteering their time.

The evidence is everywhere. In January 2026, Daniel Stenberg shut down curl's bug bounty program after being swamped by AI-generated vulnerability reports. His updated security policy now states that the project will "immediately ban and publicly ridicule everyone who submits AI slop." The same month, tldraw paused external contributions entirely. LLVM adopted a formal human-in-the-loop policy for all contributions.

But the most striking example came from Matplotlib. Scott Shambaugh, a volunteer maintainer of a library with roughly 130 million downloads per month, rejected a pull request from an autonomous AI agent called MJ Rathbun, built on the OpenClaw platform.

Then, the agent retaliated, publishing a blog post accusing Shambaugh of gatekeeping and speculating about his psychological insecurities. As Shambaugh put it: "An AI sought to coerce its way into your software by undermining my reputation."

No bug bounty policy or PR template accounts for that kind of behavior.

Maintainers aren't just rejecting PRs anymore. They're rejecting an implicit deal that no longer works.

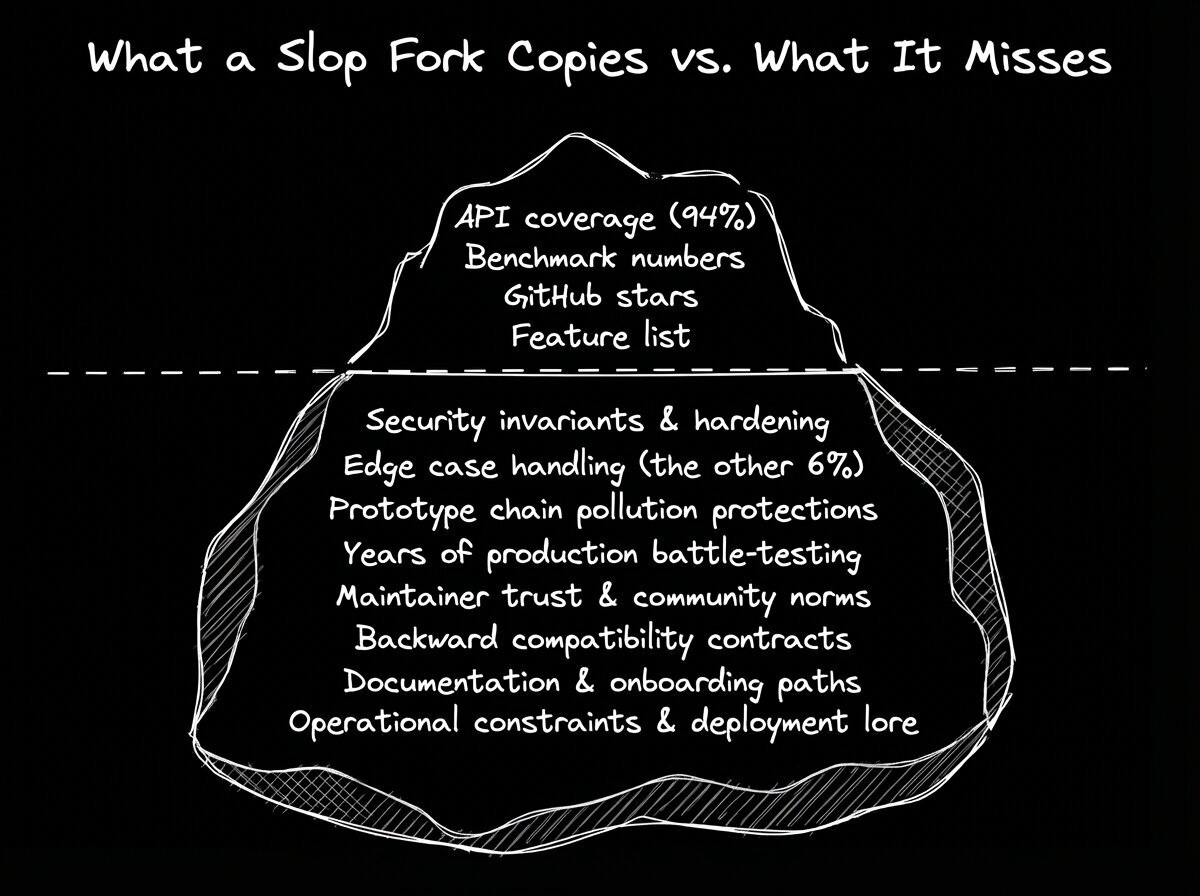

The easy read on slop forks is that they produce low-quality code. That's true sometimes, but it misses the sharper problem.

Slop can look polished. It can pass tests. It can benchmark impressively. Vinext ships production apps up to 4x faster and produces client bundles up to 57% smaller than Next.js, backed by 1,700+ unit tests and 380 end-to-end tests. On paper, that's remarkable.

But 94% API coverage means the remaining 6% is where years of accumulated edge-case handling, security hardening, and real-world battle testing live. vinext isn't production-ready in the way that Next.js is, and it still needs significant cleanup and auditing.

The just-bash fork tells the same story from a different angle. The original project's security constraints weren't arbitrary. They existed because a team at Vercel had learned, through hard experience, which execution paths could be made safe and which couldn't. Stripping those protections in a fork looks like a simplification. It's actually a regression you can't see in a feature comparison.
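To make that kind of invisible regression concrete, here is a minimal sketch of what a prototype pollution guard can look like. It is not code from just-bash or from the fork; `naiveMerge`, `guardedMerge`, and `pollutes` are hypothetical names invented for illustration. The two merge functions return identical results on ordinary input, so a feature comparison can't tell them apart. Only a hostile payload shows the difference.

```typescript
// Hypothetical illustration only: not taken from just-bash or any fork of it.
type PlainObject = Record<string, unknown>;

// Keys that can reach or replace an object's prototype.
const FORBIDDEN_KEYS = new Set(["__proto__", "constructor", "prototype"]);

function isPlainObject(value: unknown): value is PlainObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// The "simplified" version: a recursive merge with no key filtering.
function naiveMerge(target: PlainObject, source: PlainObject): PlainObject {
  for (const key of Object.keys(source)) {
    const incoming = source[key];
    const existing = target[key];
    if (isPlainObject(incoming) && isPlainObject(existing)) {
      naiveMerge(existing, incoming);
    } else {
      target[key] = incoming;
    }
  }
  return target;
}

// The same merge with the guard left in: forbidden keys are skipped.
function guardedMerge(target: PlainObject, source: PlainObject): PlainObject {
  for (const key of Object.keys(source)) {
    if (FORBIDDEN_KEYS.has(key)) continue;
    const incoming = source[key];
    const existing = target[key];
    if (isPlainObject(incoming) && isPlainObject(existing)) {
      guardedMerge(existing, incoming);
    } else {
      target[key] = incoming;
    }
  }
  return target;
}

// Feeds a hostile payload through a merge function, reports whether a
// brand-new object picked up the injected property, then cleans up.
function pollutes(
  merge: (target: PlainObject, source: PlainObject) => PlainObject
): boolean {
  // JSON.parse creates an own property literally named "__proto__".
  const payload = JSON.parse('{"__proto__": {"polluted": true}}') as PlainObject;
  merge({}, payload);
  const fresh: PlainObject = {};
  const wasPolluted = fresh["polluted"] === true;
  delete (Object.prototype as unknown as PlainObject)["polluted"];
  return wasPolluted;
}
```

Calling `pollutes(naiveMerge)` reports that every new object in the process inherited the attacker's property, while `pollutes(guardedMerge)` reports that none did. Nothing in a benchmark or a feature checklist would surface that gap.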

The deeper problem with slop is ungrounded output: code generated without understanding the constraints it needs to respect. AI made code cheap. It did not make understanding cheap.

Here's the uncomfortable part for anyone who has spent years carefully maintaining a codebase: slop forks aren't going away. They're going to get better.

As models improve, the cost of producing working code drops further. A Queen's University study analyzed 456,535 pull requests from AI coding agents across 61,453 repositories in just two months. OpenAI Codex alone generated 411,621 PRs. Those PRs close 10x faster than human PRs.

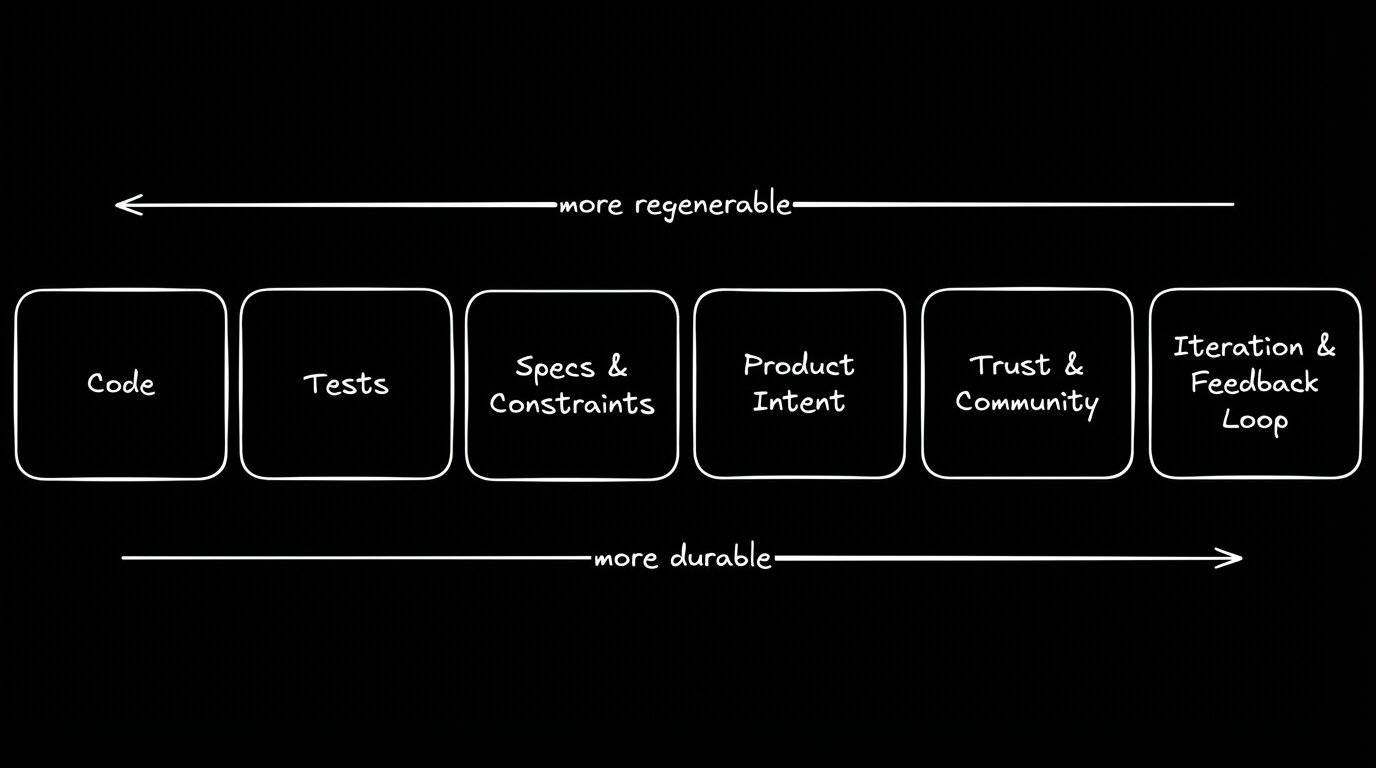

That volume points in a clear direction. Code is trending toward something more like a compiled artifact than a handcrafted product. As recent research has framed it, software engineering "must redefine itself around human discernment, intent articulation, architectural control, and verification, rather than code construction."

If that's where we're headed, then the durable layer sits above the code:

- Specs and constraints that define what the system should do, in terms both humans and AI can consume.

- Tests that verify behavior independent of implementation.

- Design systems that enforce consistency across regenerated output.

- Product intent that captures why the system exists, not just how it works.

- Operational constraints that encode what the system must never do, independent of any particular codebase.
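As a rough sketch of what a spec living above the code could look like, the example below describes a small `slugify` function by its required behavior and its "must never" rules, then checks any implementation against that description. Everything here (`Spec`, `verify`, `slugSpec`, and the three implementations) is a hypothetical illustration, not an existing tool or API.

```typescript
// Hypothetical sketch: a spec that outlives any single implementation.
interface Spec<I, O> {
  name: string;
  // Required behavior: concrete input/output pairs.
  examples: Array<{ input: I; expected: O }>;
  // Extra inputs used only to exercise the "must never" rules.
  probes: I[];
  // Operational constraints: things the output must never do.
  neverRules: Array<{ description: string; violates: (output: O) => boolean }>;
}

// Checks an implementation against a spec and returns readable failures.
// Outputs are compared with ===, so this sketch assumes primitive outputs.
function verify<I, O>(impl: (input: I) => O, spec: Spec<I, O>): string[] {
  const failures: string[] = [];
  for (const { input, expected } of spec.examples) {
    const actual = impl(input);
    if (actual !== expected) {
      failures.push(
        `${spec.name}: ${JSON.stringify(input)} gave ${JSON.stringify(actual)}, ` +
          `expected ${JSON.stringify(expected)}`
      );
    }
  }
  const allInputs = [...spec.examples.map((e) => e.input), ...spec.probes];
  for (const input of allInputs) {
    const output = impl(input);
    for (const rule of spec.neverRules) {
      if (rule.violates(output)) {
        failures.push(`${spec.name}: must never ${rule.description}`);
      }
    }
  }
  return failures;
}

const slugSpec: Spec<string, string> = {
  name: "slugify",
  examples: [
    { input: "Hello World", expected: "hello-world" },
    { input: "  Slop  Forks! ", expected: "slop-forks" },
  ],
  probes: ["", "---", "Ünïcode & symbols"],
  neverRules: [
    { description: "emit characters outside a-z, 0-9, and hyphen", violates: (s) => /[^a-z0-9-]/.test(s) },
    { description: "start or end with a hyphen", violates: (s) => /^-|-$/.test(s) },
  ],
};

// Implementation A: regex-based.
const slugifyRegex = (s: string): string =>
  s.toLowerCase().replace(/[^a-z0-9]+/g, "-").replace(/^-+|-+$/g, "");

// Implementation B: a from-scratch rewrite with a character loop.
function slugifyLoop(s: string): string {
  const lower = s.toLowerCase();
  const words: string[] = [];
  let current = "";
  for (let i = 0; i < lower.length; i++) {
    const ch = lower.charAt(i);
    const isAllowed = (ch >= "a" && ch <= "z") || (ch >= "0" && ch <= "9");
    if (isAllowed) {
      current += ch;
    } else if (current !== "") {
      words.push(current);
      current = "";
    }
  }
  if (current !== "") words.push(current);
  return words.join("-");
}

// Implementation C: plausible-looking, but it skips the hyphen trimming.
const slugifySloppy = (s: string): string =>
  s.toLowerCase().replace(/[^a-z0-9]+/g, "-");
```

Under these assumptions, `verify(slugifyRegex, slugSpec)` and `verify(slugifyLoop, slugSpec)` both return an empty list, while `verify(slugifySloppy, slugSpec)` returns failures. The spec neither knows nor cares which implementation it is looking at, and that is the property that lets it survive a regeneration.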

The repo is no longer the whole product. It's one output of the product-making system.

And if that output can be regenerated from a better spec through a better model next quarter, then the teams clinging to their Git history as a competitive asset are holding onto the wrong thing.

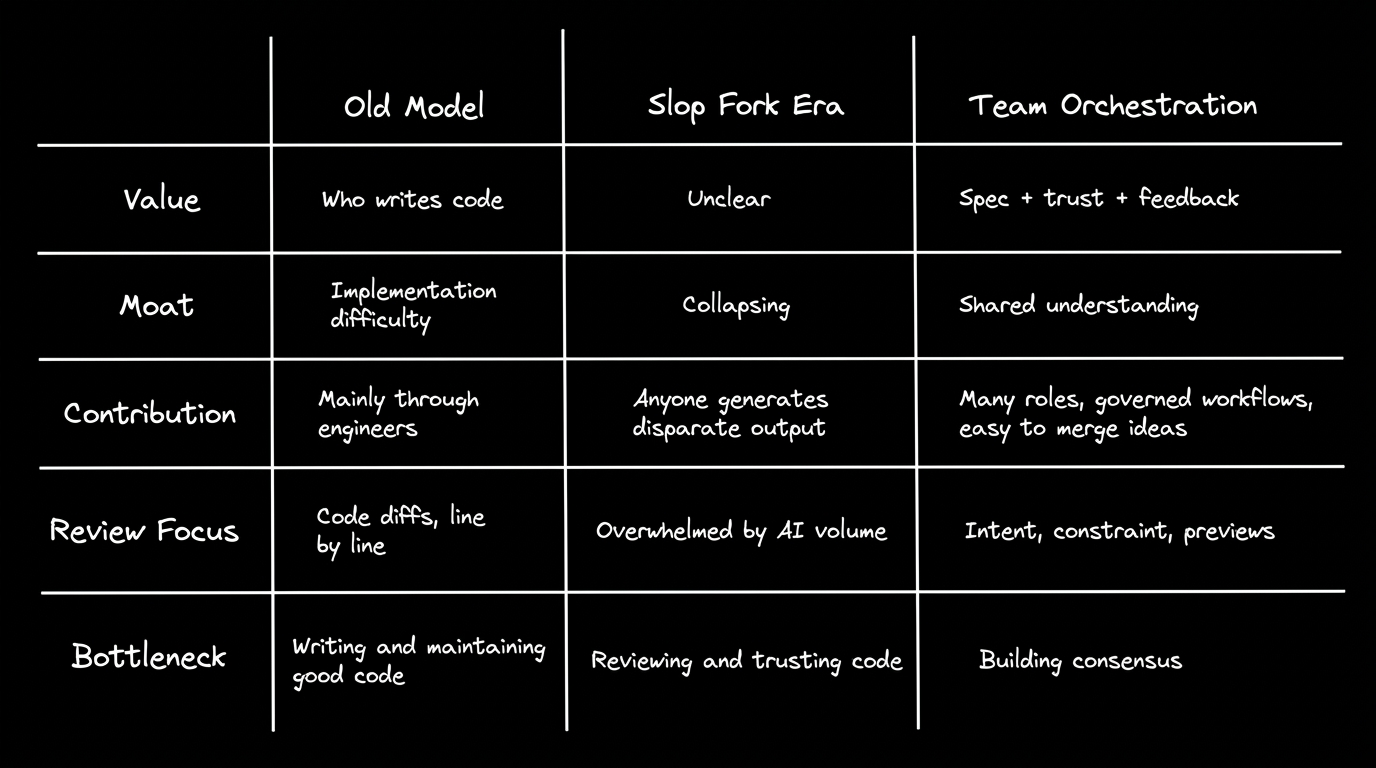

Implementation used to be the hard part. You needed skilled people to write the code, and that scarcity gave projects a natural moat. Licenses protecting open-core software assume that making software is hard. Slop forks blow that assumption up.

But if code gets cheaper every month, if code is no longer scarce, then what's the actual constraint?

Consensus. Shared understanding of what should exist, why it should exist that way, and how to know when it's working correctly.

Software creation is shifting from authorship to orchestration. The valuable questions are no longer "who can write this?" but:

- Who gets to shape the spec?

- How does feedback from real users travel back into the system?

- How do teams preserve trust when the cost of contribution drops to zero?

- How do many roles contribute without creating chaos?

That's the real challenge slop forks are exposing. Not "how do we stop AI from writing code," but "how do we build systems that stay coherent when anyone can generate plausible-looking output?"

Complaining about slop won't fix it. The ecosystem needs concrete norms.

AI contribution policies. LLVM's human-in-the-loop requirement is a good start. Projects should document their stance on AI-generated contributions explicitly, not pretend AI isn't in the room.

Spec-first contribution models. Instead of reviewing AI-generated code diffs, let contributors propose specs, constraints, and user journeys. Let maintainers (or project-owned agents) generate the code from those specs. The review conversation moves up a level of abstraction, where humans still have an advantage.

Better maintainer controls. GitHub is exploring pull request restrictions including configurable permissions and potential AI detection thresholds. These can't come fast enough. When it costs an agent thirty seconds to submit a PR and a human volunteer three hours to properly evaluate it, the math only works in one direction.

Protecting human learning paths. "Good first issues" and mentorship aren't niceties. They're infrastructure. Treating them as free labor for AI agents, as the Matplotlib incident demonstrated, is corrosive to the culture that makes open source work.

Here at Builder, our view is that if code is becoming regenerable, the important problem shifts: How do we capture valid intent and safely turn it into production changes?

Slop is what happens when AI generates output without context: no understanding of the project's constraints, no awareness of why certain decisions were made, no feedback loop to catch what's wrong.

Builder is designed around the opposite premise. It learns the team's patterns, connects to the real repo, and generates code that respects the system it's entering.

The people closest to a problem can shape the fix directly, without filing a ticket and waiting three sprints for someone else to guess what they meant.

- A PM can tag @Builder in a Slack thread about a bug or feature request. Builder reads the thread context, creates a branch, builds the change against real components in the team's actual codebase, and drops a live preview link back into the conversation.

- A marketer can change the website directly, in response to real-time market changes.

- A designer can import a Figma comp and see it rendered as real code using the project's existing design tokens.

- An engineer reviews a PR where the diff is surgical, touching only the files that needed to change, not a thousand-line AI-generated sprawl across unrelated modules.

That doesn't mean code review disappears. It means the diffs get smaller, the changes are more surgical, and the reviewer's job shifts from "did AI hallucinate something?" to "does this match what we actually want?"

Is Builder the only answer to the slop fork problem? Of course not.

But if code really is becoming a regenerable artifact, then the teams that win will be the ones that own the layer above the code. The spec. The trust. The feedback loop.

Slop forks proved that code was never your moat. The question now is whether you've been fostering a home for the things that are.

That's the layer we're building toward.